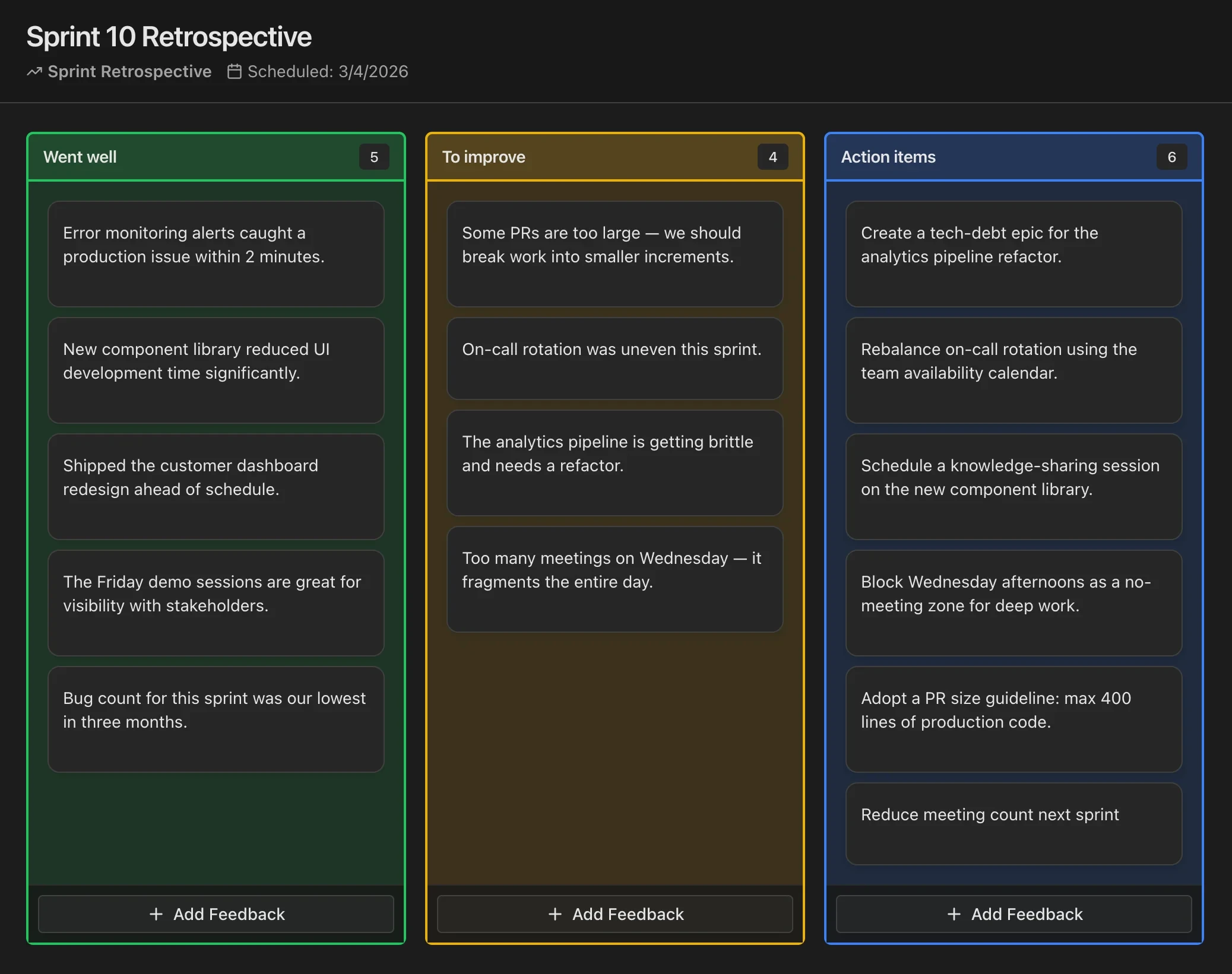

WWW (Worked - Kinda worked - Did not work)

A blunt spectrum from strong outcomes to clear failures—good for data-heavy teams.

Worked well

Practices or outcomes that reliably delivered value.

Kinda worked

Mixed results—partial wins, inconsistent execution, or unclear ownership.

Did not work

Experiments or approaches that failed or hurt flow—learn and adjust.


What is WWW (Worked - Kinda worked - Did not work)?

The WWW retrospective—Worked, Kinda Worked, and Did Not Work—is a results-oriented format that categorizes practices and decisions on a three-point effectiveness spectrum. Unlike formats that separate emotions from outcomes or that use metaphors, WWW cuts straight to performance evaluation. The format gained popularity among data-driven engineering teams who prefer direct, evidence-based discussions over interpretive frameworks.

The three columns create a gradient rather than a binary. "Worked" captures clear successes—practices that reliably delivered value. "Kinda Worked" holds the ambiguous middle ground—things that had mixed results, inconsistent execution, or partial success. "Did Not Work" identifies clear failures—approaches that should be abandoned or fundamentally rethought. This gradient is what makes the format powerful, because most real-world outcomes live in the messy middle.

The "Kinda Worked" column is the defining feature. Many retrospective formats force a binary classification: something either worked or it did not. But reality is rarely that clean. A deployment process might work technically but take too long. A communication practice might work for some team members but not others. By explicitly creating space for ambiguity, WWW tends to produce more honest and nuanced assessments.

When to use WWW

WWW is ideal after a major release, a project milestone, or at the end of a quarter when the team has concrete outcomes to evaluate. It works best when there is data to reference—velocity metrics, bug counts, customer feedback, or deployment frequency. The outcome-focused nature of the format rewards teams that can point to evidence rather than relying solely on subjective impressions.

The format suits teams of four to eight people who are comfortable with direct feedback. It works well in 30- to 45-minute sessions and is particularly effective for senior or experienced teams who do not need the training wheels of metaphors or emotional frameworks. It is also excellent for post-release retrospectives where the team needs to evaluate specific technical and process decisions against actual results.

Avoid this format when the team needs to process emotions or when the emphasis should be on interpersonal dynamics. The bluntness of "Did Not Work" can feel harsh if psychological safety is low. Also avoid it when the team is too early in a new initiative to have meaningful outcome data—evaluating things that "kinda worked" requires enough runway to observe actual results.

How to facilitate WWW

Start by sharing relevant data: sprint metrics, release outcomes, customer feedback, or incident reports. Having concrete data on screen grounds the discussion in reality and prevents the retro from becoming a contest of competing narratives. Give the team five to seven minutes to write cards, encouraging them to reference specific events, decisions, or practices rather than general sentiments.

Process the columns from left to right: Worked, Kinda Worked, Did Not Work. For the Worked column, briefly acknowledge successes and identify what made them work—was it the process, the people, the timing, or luck? For Did Not Work, focus on learning rather than blame: "What did we learn from this failure that we would not have learned otherwise?"

Spend the most time on "Kinda Worked" because this is where the richest improvement opportunities live. For each item in this column, facilitate a discussion: "What would it take to move this from Kinda Worked to Worked? Is it worth the investment, or should we cut it?" The decision to invest in or abandon partially successful practices is often more impactful than addressing clear failures, which teams naturally move away from anyway.

Tips for getting the most out of WWW

Push for specificity and evidence in every column. "Pair programming worked" is a start, but "pair programming on the authentication module worked—we shipped with zero bugs and both developers now understand the codebase" is useful because it identifies why it worked and under what conditions. This level of detail helps the team replicate success deliberately rather than hoping it happens again.

The biggest pitfall is allowing "Kinda Worked" to become a dumping ground for things people are afraid to put in "Did Not Work." Watch for hedging language: if someone says "the new review process kinda worked" but their body language or tone says otherwise, gently probe: "If you had to bet, would you put it closer to Worked or Did Not Work?" Sometimes the honest answer is that something failed, and the team needs permission to say so.

After the retro, create a simple decision matrix for Kinda Worked items: for each one, decide to invest (move toward Worked), pivot (try a different approach), or abandon (move to Did Not Work and replace). This decisiveness prevents the team from carrying partially effective practices indefinitely.
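
For teams that track retro outcomes in a script or notebook, the invest/pivot/abandon matrix can be sketched as a small data structure. This is a minimal illustration, not a feature of any particular tool: the `KindaWorkedItem` class, its `owner` and `next_step` fields, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    INVEST = "invest"    # keep the practice and work to move it toward Worked
    PIVOT = "pivot"      # keep the goal but try a different approach
    ABANDON = "abandon"  # move it to Did Not Work and replace it


@dataclass
class KindaWorkedItem:
    practice: str
    decision: Decision
    owner: str       # hypothetical field: who follows up
    next_step: str   # hypothetical field: the concrete action agreed on


def group_by_decision(items):
    """Return a dict mapping each Decision to the practices assigned to it."""
    matrix = {decision: [] for decision in Decision}
    for item in items:
        matrix[item.decision].append(item.practice)
    return matrix


# Illustrative entries only; names and actions are made up.
items = [
    KindaWorkedItem("New review process", Decision.PIVOT, "Sam", "Trial smaller reviews"),
    KindaWorkedItem("Pair programming", Decision.INVEST, "Ana", "Extend to a second module"),
    KindaWorkedItem("Manual deployment checklist", Decision.ABANDON, "Lee", "Replace with automation"),
]

matrix = group_by_decision(items)
for decision, practices in matrix.items():
    print(f"{decision.value}: {', '.join(practices) or '(none)'}")
```

Requiring exactly one decision per item is the point of the structure: an item with no decision is one the team is still carrying by default.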

Variations and adaptations

For remote teams, pair the WWW format with a shared dashboard showing relevant metrics. Screen-share the data at the start and reference it throughout. Remote teams often benefit from adding a "confidence" indicator to each card—how confident is the author that their assessment is correct? Low-confidence items deserve more discussion because they may reflect information asymmetry across the team.
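
One way to act on the confidence indicator is to order cards so the least certain assessments are discussed first. The sketch below assumes a 1 (low) to 5 (high) confidence scale and a hypothetical `Card` structure; neither is prescribed by the format, and the sample cards are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Card:
    text: str
    column: str      # "Worked", "Kinda Worked", or "Did Not Work"
    confidence: int  # assumed scale: 1 (low) to 5 (high)


def discussion_order(cards):
    """Sort cards so the lowest-confidence assessments come first."""
    return sorted(cards, key=lambda card: card.confidence)


def low_confidence(cards, threshold=2):
    """Return cards whose confidence is at or below the threshold."""
    return [card for card in cards if card.confidence <= threshold]


# Illustrative cards only.
cards = [
    Card("Pair programming on the authentication module", "Worked", 5),
    Card("New review process", "Kinda Worked", 2),
    Card("Deployment process takes too long", "Kinda Worked", 3),
]

ordered = discussion_order(cards)
flagged = low_confidence(cards)
```

The threshold of 2 is arbitrary; a team might instead flag any card where authors of similar cards disagree on confidence.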

For async teams, share the data and the board simultaneously, giving the team 48 hours to add and discuss cards. Use threaded comments on Kinda Worked items to develop the invest/pivot/abandon recommendation before the synchronous session. The live session then becomes a decision meeting rather than a discussion meeting, which is more efficient for distributed teams.

A variation for continuous delivery teams replaces the three columns with a continuous spectrum—a horizontal line from "Did Not Work" on the left to "Worked" on the right. Team members place cards along the spectrum, which reveals a more nuanced distribution of outcomes than three discrete buckets. Another adaptation adds a fourth column: "Too Early to Tell" for practices or changes that were introduced recently and need more time before evaluation. This prevents premature judgment and encourages patience with new experiments.
